The 2025 Zach's Tech Blog Holiday Roundup

We made it through another year!

Merry Christmas, Happy New Year, Happy Hanukkah, and enjoy all of your other holidays as well! In between my quest to find the best hot buttered rum in New York City and traveling back to my hometown of Los Angeles, I’ve decided to revive the Zach’s Tech Blog holiday tradition of doing a little roundup of all of my favorite things I’ve written this year!

I started 2025 with about 700 subscribers. This year, we’ve more than doubled that, to over 1,600! We’ve had some hits as well, getting over 8,000 views on my most popular article this year. I’ve gotten coffee and drinks with multiple people I’ve met through this blog, I’ve been invited to speak at conferences, and overall, I’ve had an amazing year writing all these articles. And I wouldn’t be able to do it if there weren’t people reading!

So… thank you so much for reading for another year!

I really appreciate everybody who reads these articles, likes them, comments, or reaches out to tell me they enjoyed something I wrote. I read it all (really!) and seeing smart, cool, interesting people appreciate the work I do warms my heart. If you do feel inspired to message me on Twitter or LinkedIn, feel free to say hi!

Now on to my favorite articles of the year!

My Hottest Take

Most people think that large language models (LLMs) are pretty bad at writing Verilog, the special language we use to design digital chips. Unlike with high-level programming languages like Python or JavaScript, the performance of a piece of Verilog code is highly dependent on the quality of the Verilog code itself, and LLMs generally write mediocre Verilog at best. There’s a reason why most startups using LLMs for chip design are building products for verifying chips, rather than writing Verilog.

I definitely agree that LLMs are a very, very long way away from writing the kind of high-quality Verilog that you’d find in the core mathematics engine of something like an Nvidia GPU. But most chips have a lot of subsystems that are acquired from third-party vendors, called “IP cores”. A large, modern chip will have a substantial part of its development budget allocated to IP cores. And a lot of these IP cores can fulfill their functions even if their implementation is mediocre. So I made a prediction:

We haven’t actually seen an LLM-powered IP company be founded yet, but Sphere Semi is basically doing this exact thing for analog chips and analog IP. They’re developing reinforcement-learning-powered AI tools for analog chip design, but instead of selling those tools, they’re selling chips and IP cores that they develop in-house using those tools.

My Most Popular Article

Every chip designer hates the oligopoly that Cadence, Synopsys, and Siemens have on the specialized software we use to design chips. What’s surprising, though, is that nobody’s successfully disrupted this oligopoly; after all, Silicon Valley startups are really good at making software capable of disrupting legacy incumbents. Those in the know, however, are aware of the inter-company politics that keep startups from successfully selling chip design software.

Why is it so hard for startups to compete with Cadence?

If you talk to any chip designer, they’ll complain about Cadence’s chip design software. They’ll probably complain about Synopsys too, and maybe Mentor Graphics (which is now owned by Siemens, but everybody still calls them Mentor Graphics). These three companies have an oligopoly on chip design software, despite their software being slow, difficult to …

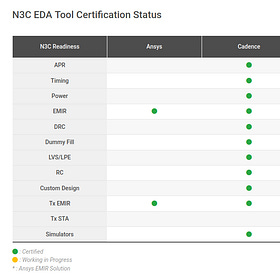

I think that a lot of non-chip-designers probably appreciated getting a peek into the weird world of politics behind the chip design software industry. I also tried to end on a hopeful note: there are some startups that might be able to disrupt the industry, and I’m rooting for them!

This article also got me a bit of flak because I suggested a political marriage of an EDA startup founder to Morris Chang’s daughter as a solution to the Cadence / Synopsys / Siemens oligopoly. Though when everybody is talking about how Taiwan is just like Arrakis from Dune, I don’t think a political marriage should be totally out of the question.

My Favorite Article

Just like last year, my favorite article is about side-channel attacks on AI chips. Secure AI hardware is a really interesting mix of the two biggest focuses I’ve had in my career and in my writing: AI accelerators, and secure hardware design. Unfortunately, a lot of people see secure and tamper-proof AI hardware as unnecessary. Those people are wrong:

Side channel attacks on AI chips are very real.

I’ve talked a lot about side-channel attacks on cryptographic systems. These sorts of attacks usually focus on compromising low-cost, commodity cryptographic hardware, like the chips you’d find in a credit card or a YubiKey. But more recently, industry leaders in the AI world, including OpenAI, have been calling for more focus on

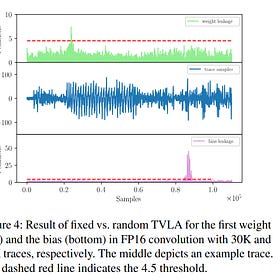

The BarraCUDA attack is an extremely important proof-of-concept for side-channel attacks on real-world AI systems. If you want to deploy an AI model on an edge device like a security camera, a drone, or a self-driving car, you have to assume that anybody with physical access to the device can steal your model weights. And if they have your model weights, they can find adversarial examples that make your model go haywire. For example, they could install special graffiti on stop signs that makes your self-driving model think it’s actually a different kind of sign.

On that slightly terrifying note, that’s all for this year! Happy holidays, thanks for reading, and I’ll catch you in the new year with some new articles!

- Zach from Zach’s Tech Blog