Crossbar’s new security chip isn’t actually secure.

Designing tamper-proof chips is hard.

Crossbar, Inc. is a company that was founded to commercialize resistive random access memory (RRAM), an emerging nonvolatile memory technology that’s one of the candidates to replace eFlash in modern process nodes. In the past few years, they’ve started to focus on RRAM’s unique security properties. Compared to flash memory, it’s more robust to laser voltage probing attacks, and it can be used as a physically unclonable function (PUF) to generate stable, secure random keys inside the chip.

Licensing secure memory and PUF IP isn’t super lucrative, though, so Crossbar decided to vertically integrate and start designing their own security chips and hardware products that integrate those chips. But Crossbar didn’t want to design their chip in the way that most security chips are designed: closed-source and highly secretive. Instead, they worked with the legendary hardware hacker Bunnie Huang to partially open-source their chip design.[1] This is really cool, and it’s exciting to see more companies embracing open-source silicon; you can go look at all the RTL right here.

But there’s one issue with making your silicon open-source: everybody can see your mistakes. That includes people like me, who have been designing highly secure hardware for years. And Crossbar’s implementation of masked AES has a significant flaw that makes it fundamentally insecure against side-channel attacks. Today, we’re going to be looking at what went wrong, and how they could have done better.

What makes a chip side-channel secure?

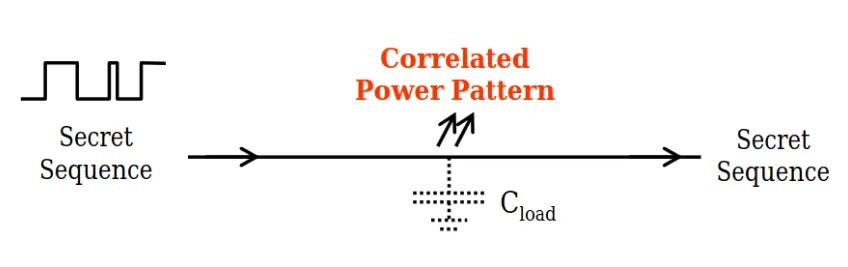

Whenever the value on a wire in a chip switches from a 0 to a 1 or vice versa, it consumes a small amount of power. If you’re trying to design a secure chip that’s processing sensitive cryptographic keys, this can be a problem. For example, let’s say you want to send a key from one part of a chip to another along a bus. The power consumed by that transaction depends on how many bus wires toggle, which in turn depends on the value of the key. If an attacker measures the power consumption of this chip over a lot of transactions, they can reconstruct the value of the secret key. This is called a power analysis side-channel attack.
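To make the bus example concrete, here is a toy model in Python. It assumes one unit of power per wire that toggles and a bus that idles at all zeros; both are simplifications for illustration, not measurements from any real chip, and the function names are made up for this sketch.

```python
def hamming_weight(x: int) -> int:
    """Number of 1 bits in x."""
    return bin(x).count("1")


def bus_transfer_power(previous: int, new: int) -> int:
    """Toy power model (an assumption): one unit per bus wire that toggles."""
    return hamming_weight(previous ^ new)


# Assume the 8-bit bus idles at all zeros before each transfer.
for key_byte in (0x00, 0x0F, 0xFF):
    toggles = bus_transfer_power(0x00, key_byte)
    print(f"key byte {key_byte:#04x}: {toggles} wires toggle")
```

In this model the power drawn by an unmasked transfer is just the number of 1 bits in the key byte, which is exactly the kind of relationship an attacker exploits.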

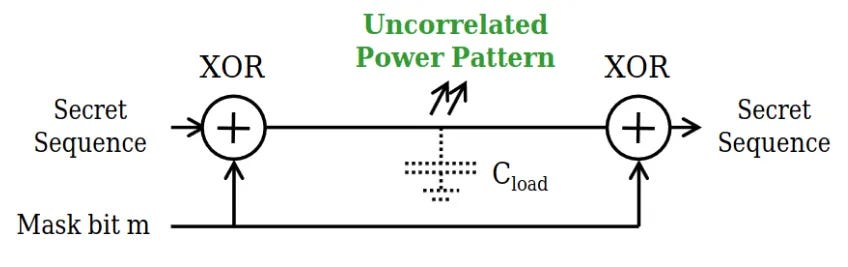

Luckily, there are ways to prevent these side-channels. By XOR-ing the key data with random data, the power consumption of sending data along wires becomes randomized.
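A quick way to see why this helps, using the same toy toggle-count model (an assumption, as is the idle-at-zero bus): enumerate every possible 8-bit mask and compare the histogram of toggled wires for two very different secrets.

```python
from collections import Counter


def hamming_weight(x: int) -> int:
    """Number of 1 bits in x."""
    return bin(x).count("1")


def masked_toggle_distribution(secret: int, width: int = 8) -> Counter:
    """Histogram of toggled-wire counts over every possible mask.

    Assumes the bus idles at zero, so toggles == weight of the masked value.
    """
    return Counter(hamming_weight(secret ^ mask) for mask in range(1 << width))


# XOR with a uniform mask is a permutation of all byte values, so the
# histogram is identical no matter what the secret is.
assert masked_toggle_distribution(0x00) == masked_toggle_distribution(0xFF)

# The receiver removes the mask by XOR-ing with it again.
mask = 0xA5
assert (0x3C ^ mask) ^ mask == 0x3C
```

The mask itself has to travel separately, of course, but neither the mask nor the masked value alone tells an observer anything about the secret.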

Unfortunately, it’s harder to mask actual computations this way. If we want to implement a masked AND gate, we can’t just XOR each input with a mask and then XOR the output by the same mask to recover the desired output value. We have to be more clever.
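The naive approach described above is easy to check exhaustively on single bits. This sketch masks both inputs with the same bit, ANDs them, and unmasks the result; the function name is hypothetical.

```python
from itertools import product


def naive_masked_and(a: int, b: int, m: int) -> int:
    """Mask both inputs with m, AND them, then unmask the result with m."""
    return ((a ^ m) & (b ^ m)) ^ m


# Collect every (a, b, m) where the naive scheme gives the wrong answer.
failures = [
    (a, b, m)
    for a, b, m in product((0, 1), repeat=3)
    if naive_masked_and(a, b, m) != (a & b)
]
print(failures)
```

With `m = 1` the expression collapses to `a | b` instead of `a & b`, so it is wrong whenever the two inputs differ. AND is not linear over XOR, which is why the mask can't simply be carried through.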

In 2003, Elena Trichina developed an implementation of an AND gate that was supposed to be secure against side-channel attacks. Specifically, the Trichina AND gate was “1-probe secure”: the value of the secret key never appeared directly on a wire in the chip, so a single probe connected to any wire on the chip could not recover it. That (roughly) meant that the power consumption of the chip wouldn’t be correlated with the secret key.[2] This notion is important: for a chip to be secure against power analysis, the real value of the secret key should never appear directly on a wire.
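Here is a sketch of the textbook form of a Trichina-style masked AND on single bits, with an exhaustive check of both correctness and 1-probe security. This is the gate as it is usually presented in the literature, not Crossbar’s RTL; the wire names are made up, and the model only looks at the settled value of each wire.

```python
from collections import Counter
from itertools import product


def trichina_and(a_m: int, b_m: int, m_a: int, m_b: int, r: int) -> dict:
    """Masked AND: inputs are a^m_a and b^m_b, output is (a & b) ^ r.

    Returns the settled value of every intermediate wire.
    """
    p0, p1, p2, p3 = a_m & b_m, a_m & m_b, m_a & b_m, m_a & m_b
    t1 = r ^ p0   # the fresh random bit r goes in first
    t2 = t1 ^ p1
    t3 = t2 ^ p2
    out = t3 ^ p3
    return {"p0": p0, "p1": p1, "p2": p2, "p3": p3,
            "t1": t1, "t2": t2, "t3": t3, "out": out}


def wire_distributions(a: int, b: int) -> dict:
    """Histogram of each wire's value over all masks, for fixed secrets a, b."""
    dists: dict = {}
    for m_a, m_b, r in product((0, 1), repeat=3):
        wires = trichina_and(a ^ m_a, b ^ m_b, m_a, m_b, r)
        assert wires["out"] ^ r == a & b  # unmasking gives the real AND
        for name, value in wires.items():
            dists.setdefault(name, Counter())[value] += 1
    return dists


# 1-probe security on settled values: no single wire's distribution
# depends on the secret inputs.
reference = wire_distributions(0, 0)
for a, b in product((0, 1), repeat=2):
    assert wire_distributions(a, b) == reference
```

Note what this check does not cover: it says nothing about what a wire does on its way to its settled value.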

Crossbar’s implementation is similar to Elena Trichina’s design, with some minor improvements. Unfortunately, this kind of design was shown to be insecure as early as 2005.

Why isn’t Crossbar’s implementation secure?

The physical logic gates and wires that make up a digital circuit all have different amounts of propagation delay through them; data doesn’t flow through these circuits instantly. This can result in behavior called “glitches”, where intermediate values in a digital circuit transition from 0 to 1 and back multiple times during a single cycle as values propagate through the logic. In practice, glitches account for a significant portion of a chip’s power consumption… so they should also contribute to side-channel leakage.

In early 2005, a paper confirmed that these sorts of glitches actually do cause side-channel leakage. By late 2005, glitching power consumption had been used to compromise masked AES implementations in practice.

These glitching attacks work because, even when the value of the secret key is never exposed as the final state of a wire, it can be exposed as an intermediate state of a wire during a glitch. That breaks 1-probe security, so the chip’s power consumption becomes correlated with the value of the secret key. In other words, Crossbar’s chip isn’t actually secure against power analysis attacks.
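A deliberately simplified illustration of the idea, using a textbook Trichina-style gate rather than Crossbar’s actual netlist: assume (hypothetically) that during a glitch some wire briefly carries the XOR of two partial products before the fresh random bit has been mixed in. Real glitch behavior depends on placement, routing, and gate delays, so treat this as a cartoon.

```python
from collections import Counter
from itertools import product


def transient_value(a: int, b: int, m_a: int, m_b: int) -> int:
    """Hypothetical transient: two partial products XOR-ed without the random bit.

    (a_m & b_m) ^ (a_m & m_b) simplifies to a_m & b, which involves b unmasked.
    """
    a_m, b_m = a ^ m_a, b ^ m_b
    return (a_m & b_m) ^ (a_m & m_b)


def transient_distribution(a: int, b: int) -> Counter:
    """Histogram of the transient value over all input masks."""
    return Counter(
        transient_value(a, b, m_a, m_b) for m_a, m_b in product((0, 1), repeat=2)
    )


for a, b in product((0, 1), repeat=2):
    print(f"a={a} b={b}: {dict(transient_distribution(a, b))}")

# When b is 0 the transient is never 1; when b is 1 it is 1 half the time.
assert transient_distribution(0, 0)[1] == 0
assert transient_distribution(0, 1)[1] == 2
```

The settled wires all look random, but this in-between value is statistically tied to `b`, and its switching activity shows up in the power trace.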

There are various modern techniques that hardware designers use to implement side-channel secure algorithms even in the presence of glitches. The most common one I’ve seen in industry is domain-oriented masking (DOM), which inserts register stages into a design to force signals to arrive in a certain order and prevent glitches that would leak data. Applying DOM to a circuit increases its latency, but overall, it’s a fairly resource-efficient technique for implementing a masked algorithm. Unfortunately, Crossbar didn’t use DOM or a similar glitch-secure masking scheme in their design.

I have to give kudos to Crossbar for open-sourcing their RTL for this chip. So much of the silicon we rely on every day is closed source -- especially security chips. But open-source security silicon poses some challenges. This isn’t like software, where bugs can be fixed with a simple update. Crossbar is putting these chips, which have clear security flaws, into real products, and expecting people to use them to store their passkeys and custody their cryptocurrency. I respect Crossbar for trying to make open-source silicon, but they’re lacking the collaborative spirit that makes open-source development work so effectively.

On open-source silicon.

The right way to build open-source silicon isn’t just to take your design and publish the RTL online. It’s important to collaborate with the broader open-source community, which is aware of these sorts of attacks and how to prevent them. OpenTitan has a side-channel secure AES implementation that leverages domain-oriented masking. On the topic of a fully combinational masked S-box, like the one Crossbar is using, they say this (emphasis mine):

Alternatively, the two original versions of the masked Canright S-Box can be chosen as proposed by Canright and Batina: “A very compact “perfectly masked” S-Box for AES (corrected)”. These are fully combinational (one S-Box evaluation every cycle) and have lower area footprint, but they are significantly less resistant to SCA. They are mainly included for reference but their usage is discouraged due to potential vulnerabilities to the correlation-enhanced collision attack as described by Moradi et al.: “Correlation-Enhanced Power Analysis Collision Attack”.

If Crossbar had leveraged OpenTitan’s DOM AES implementation, not only would they have a more secure implementation, but it would serve as additional silicon proof of an agreed-upon implementation that’s gathering support among many different open-source silicon developers. Instead, Crossbar developed their own implementation, with flaws, and released it. Again, I respect them for making their silicon open source, but I hope open-source silicon developers in the future work more closely with the open-source community to ensure their designs are secure.

And one last note before I go: I would discourage any readers from buying any Crossbar PHSM devices due to this security flaw.

[1] Bunnie didn’t work on the cryptographic hardware accelerators, and this post is not a critique of him or any of his work on the Baochip-1x project. It is, however, a critique of Crossbar, because a company selling security chips should not be making this mistake.

[2] The d-probing model and how it relates to power side-channels is way beyond the scope of this article. Another time!